The graphics card industry has changed dramatically over the years, but many older technologies played an important role in shaping modern computing. One of them was ATI HyperMemory, a memory-sharing technology that ATI Technologies developed for budget graphics cards in the mid-2000s. At a time when dedicated video memory was expensive, it gave users a more affordable route to multimedia playback, casual gaming, and better graphics performance on home computers.

Many PC users from that era remember seeing graphics cards labeled with “HyperMemory” on the packaging without fully understanding what it meant. Some believed it increased graphics power automatically, while others thought it was simply a marketing term. In reality, the technology was a clever engineering solution designed to reduce manufacturing costs while still providing acceptable graphics performance for everyday users.

This article explores the complete background of ATI HyperMemory, including how it works, why it was introduced, its advantages and limitations, and how it compares to modern graphics memory systems.

Bio Table

| Field | Details |
|---|---|
| Keyword | ATI HyperMemory |
| Article Type | Informative Technology Article |
| Category | Technology |
| Sub Category | Graphics Cards / GPU Technology |
| Focus Topic | ATI HyperMemory Technology |
| Search Intent | Informational |
| Target Audience | Tech Users, Gamers, PC Enthusiasts, Students |
| Content Length | 1400+ Words |
| Keyword Usage | Natural & SEO Friendly |
| Tone | Professional and Human Written |
| SEO Type | On-Page SEO Optimized |
| Reading Level | Easy to Understand |
| Main Company | ATI Technologies |
| Related Brand | AMD |
| Related Technology | Shared GPU Memory Technology |

The Beginning of ATI HyperMemory

During the early 2000s, computer graphics technology was evolving rapidly. Games were becoming more demanding, multimedia applications were expanding, and operating systems required stronger graphical capabilities. At the same time, dedicated graphics memory was costly, which made high-performance graphics cards expensive for average consumers.

To solve this issue, ATI Technologies introduced ATI HyperMemory around 2004. The goal was simple: create low-cost graphics cards that could still provide reasonable performance for regular users without requiring large amounts of onboard video memory.

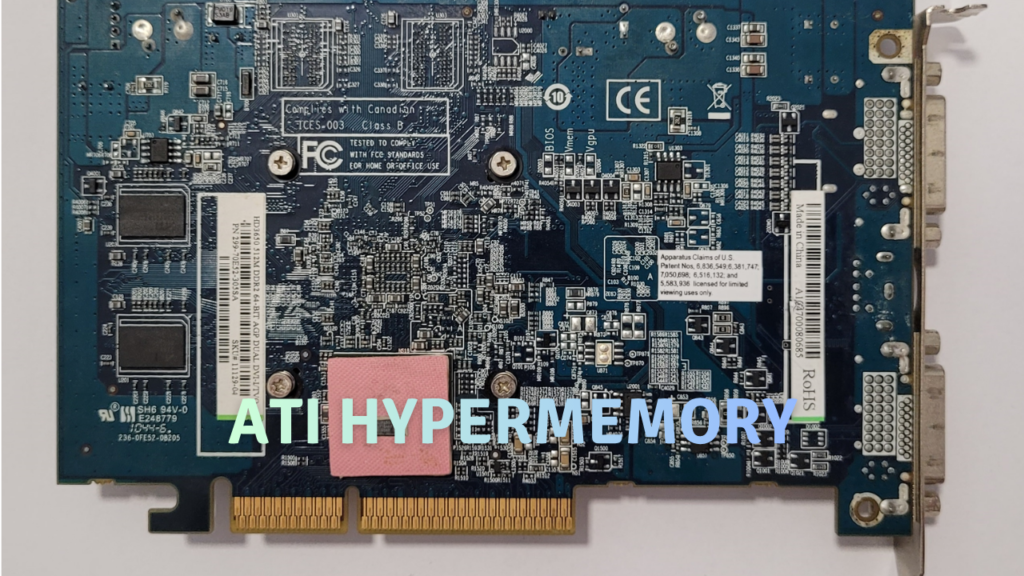

The technology appeared mostly in entry-level graphics cards from the Radeon series. Instead of placing large memory chips directly on the graphics card, manufacturers could install a smaller amount of dedicated memory and allow the GPU to borrow additional memory from the computer’s system RAM when necessary.

This helped reduce production costs while keeping graphics cards affordable for students, office users, and casual gamers.

What Is ATI HyperMemory?

In simple terms, ATI HyperMemory is a graphics memory management technology that allows a GPU to use part of the computer’s main RAM as extra video memory.

Normally, a graphics card uses its own dedicated VRAM to hold textures, frame buffers, and other data the GPU works on. Dedicated VRAM is faster because it sits on the card itself and connects to the graphics processor over its own memory bus. However, dedicated memory increases the overall cost of the graphics card.

With HyperMemory, the GPU combines:

- Small onboard video memory

- Shared system RAM

The graphics card dynamically accesses system memory whenever additional graphical resources are needed. This approach reduced hardware costs and allowed manufacturers to create budget-friendly GPUs with lower amounts of physical VRAM.

For example, a graphics card might carry only 64MB of dedicated memory yet be advertised as supporting 256MB, with the remaining 192MB coming from shared system memory.
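
The arithmetic behind that kind of label can be sketched in a few lines. This is only an illustration of the 64MB and 256MB figures used above; the function name `shared_portion_mb` is made up for this example and does not come from any ATI tool or driver.

```python
def shared_portion_mb(advertised_mb: int, dedicated_mb: int) -> int:
    """Return how much of the advertised total must come from system RAM."""
    if dedicated_mb > advertised_mb:
        raise ValueError("dedicated memory cannot exceed the advertised total")
    return advertised_mb - dedicated_mb


# Figures from the example above: 64MB on the card, 256MB on the box.
shared = shared_portion_mb(advertised_mb=256, dedicated_mb=64)
print(f"{shared} MB borrowed from system RAM")                     # 192 MB
print(f"{64 / 256:.0%} of the advertised memory is on the card")   # 25%
```

In other words, only a quarter of the advertised capacity in this example is physically present on the card.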

How the Technology Works

The operation of ATI HyperMemory depended heavily on the PCI Express interface, which was relatively new during that period. PCI Express offered more bandwidth between the motherboard and the graphics card than the older AGP interface, and it did so in both directions, which mattered because the GPU had to read from and write to system RAM.

When an application required more memory than the onboard VRAM could provide, the graphics driver automatically set aside a portion of the system RAM for the GPU. The driver and operating system handled this in the background, so users usually did not need to configure anything manually.

The GPU prioritized dedicated VRAM for high-speed operations while using shared memory for less critical data. This helped maintain acceptable performance for light gaming, video playback, and general desktop usage.
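
The idea of keeping critical data in VRAM and spilling the rest into system RAM can be illustrated with a toy model. This is not ATI's actual driver logic, which the article does not describe in detail; the `Resource` class, the priority scale, and the resource sizes are all hypothetical and chosen only to show the principle.

```python
from dataclasses import dataclass


@dataclass
class Resource:
    name: str
    size_mb: int
    priority: int  # hypothetical scale: higher means more performance-critical


def place_resources(resources, vram_mb, shared_limit_mb):
    """Greedy placement: the most critical resources go to on-card VRAM first;
    whatever does not fit spills into borrowed system RAM."""
    placement = {}
    vram_free, shared_free = vram_mb, shared_limit_mb
    for res in sorted(resources, key=lambda r: r.priority, reverse=True):
        if res.size_mb <= vram_free:
            placement[res.name] = "vram"
            vram_free -= res.size_mb
        elif res.size_mb <= shared_free:
            placement[res.name] = "system_ram"
            shared_free -= res.size_mb
        else:
            raise MemoryError(f"no room for {res.name}")
    return placement


# Illustrative workload for a card with 64MB on board and up to 192MB shared.
frame = [
    Resource("framebuffer", 16, priority=3),
    Resource("depth_buffer", 8, priority=3),
    Resource("frequently_used_textures", 32, priority=2),
    Resource("rarely_used_textures", 96, priority=1),
]
for name, location in place_resources(frame, vram_mb=64, shared_limit_mb=192).items():
    print(f"{name}: {location}")
```

With these made-up sizes, the frame buffer, depth buffer, and frequently used textures fit in the 64MB of VRAM, while the large set of rarely used textures ends up in system RAM.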

Although system RAM was slower than dedicated VRAM, the improved PCI Express bandwidth made the technology practical for entry-level systems.
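
A rough calculation shows why the interface mattered. The sketch below uses the commonly quoted theoretical peak rates for first-generation PCI Express (250 MB/s per lane in each direction) and AGP 8x (about 2133 MB/s); these are not figures from ATI, real-world throughput was lower, and the 32MB texture size is an arbitrary example.

```python
PCIE1_MB_PER_S_PER_LANE = 250   # PCI Express 1.x, per lane, per direction (peak)
AGP_8X_MB_PER_S = 2133          # approximate peak rate of AGP 8x


def pcie1_bandwidth_mb_s(lanes: int) -> int:
    """Peak one-direction bandwidth of a first-generation PCI Express link."""
    return lanes * PCIE1_MB_PER_S_PER_LANE


def transfer_ms(size_mb: float, bandwidth_mb_s: float) -> float:
    """Ideal time in milliseconds to move size_mb at the given bandwidth."""
    return size_mb / bandwidth_mb_s * 1000


x16 = pcie1_bandwidth_mb_s(16)
print(x16)                                          # 4000
print(round(transfer_ms(32, x16), 1))               # 8.0
print(round(transfer_ms(32, AGP_8X_MB_PER_S), 1))   # 15.0
```

Under these idealized assumptions, pulling a 32MB texture from system RAM takes roughly half as long over a x16 PCI Express slot as over AGP 8x.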

Graphics Cards That Used HyperMemory

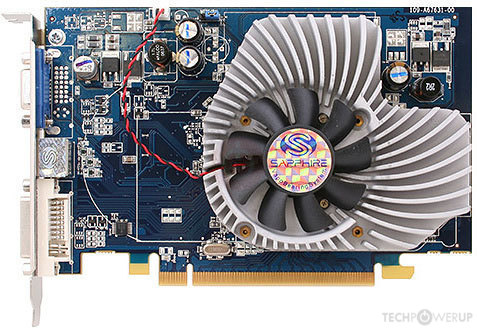

Several Radeon graphics cards featured this memory-sharing technology. These GPUs targeted mainstream and budget computer users rather than enthusiasts.

Popular examples included:

- ATI Radeon X300 HyperMemory

- ATI Radeon X550 HyperMemory

- ATI Radeon X1300 HyperMemory

- ATI Radeon HD 2400 Pro HyperMemory

These cards were commonly found in affordable desktop computers from companies such as Dell and HP.

Most of them were designed for:

- Office computing

- DVD and media playback

- Internet browsing

- Educational systems

- Older PC games

They were not intended for high-end gaming or professional graphics workloads.

Advantages of ATI HyperMemory

One reason ATI HyperMemory became popular was its ability to make dedicated graphics cards more affordable. During that period, even low-end dedicated GPUs could be expensive for budget PC builders. HyperMemory offered a middle ground between integrated graphics and full-performance gaming cards.

Another important benefit was lower power consumption. Because many HyperMemory cards carried less onboard memory, they often drew less power and produced less heat. This made them suitable for compact desktop systems and office environments.

The technology also improved multimedia experiences compared to many integrated graphics solutions available at the time. Watching videos, running Windows visual effects, and playing lightweight games generally felt smoother on HyperMemory-enabled graphics cards.

For casual users, the difference was noticeable. A basic computer with a HyperMemory GPU often handled graphical tasks better than systems relying entirely on motherboard-integrated graphics.

Limitations and Performance Issues

Despite its advantages, ATI HyperMemory had several limitations that prevented it from competing with true gaming graphics cards.

The biggest drawback was speed. For the GPU, shared system RAM is much slower to reach than dedicated VRAM: every access has to cross the PCI Express link and the motherboard's memory controller, and the same memory is also being used by the CPU. When games or applications relied heavily on shared memory, performance could drop significantly.
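
A simple model makes the slowdown concrete. It assumes that a given fraction of the GPU's memory traffic goes to shared RAM and the rest to VRAM, and it weights the two bandwidths by the time each portion takes. The 8 GB/s and 2 GB/s figures are illustrative assumptions, not measurements of any HyperMemory card.

```python
def effective_bandwidth(vram_gb_s: float, shared_gb_s: float,
                        shared_fraction: float) -> float:
    """Blended bandwidth when shared_fraction of the traffic hits system RAM.

    Time to move 1 GB is the sum of the time spent on each memory pool,
    so the result is a weighted harmonic mean of the two bandwidths.
    """
    if not 0.0 <= shared_fraction <= 1.0:
        raise ValueError("shared_fraction must be between 0 and 1")
    time_per_gb = (1 - shared_fraction) / vram_gb_s + shared_fraction / shared_gb_s
    return 1 / time_per_gb


# Assumed figures: 8 GB/s to on-card VRAM, 2 GB/s effective to shared RAM.
for fraction in (0.0, 0.25, 0.5, 1.0):
    blended = effective_bandwidth(8.0, 2.0, fraction)
    print(f"{fraction:.2f} of traffic shared -> {blended:.2f} GB/s")
```

Even in this simplified model, sending half of the traffic to the slower pool cuts the blended bandwidth to well under half of what the VRAM alone could deliver, because the slow accesses dominate the total time.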

Another issue involved memory availability. Since the graphics card borrowed part of the computer’s RAM, the system had less memory available for normal tasks. On computers with only 512MB or 1GB of RAM, this could noticeably affect overall performance.
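
The effect on a small system is easy to estimate. The sketch below assumes a worst case in which the card borrows 192MB, the shared portion of a hypothetical 64MB card advertised as 256MB; because the borrowing was dynamic, the actual amount in use at any moment could be lower.

```python
def ram_left_for_system(total_ram_mb: int, borrowed_mb: int) -> int:
    """System RAM remaining for the OS and applications after the GPU's share."""
    if borrowed_mb > total_ram_mb:
        raise ValueError("cannot borrow more RAM than is installed")
    return total_ram_mb - borrowed_mb


BORROWED_MB = 192  # assumed worst case for this example
for installed in (512, 1024):
    left = ram_left_for_system(installed, BORROWED_MB)
    print(f"{installed} MB installed -> {left} MB left for the system")
```

On a 512MB machine this worst case leaves 320MB for everything else, which helps explain why low-memory systems felt the impact most.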

Gaming limitations were also clear. The newest 3D games of that era often required more graphical power and memory bandwidth than HyperMemory cards could deliver. Users could play older or less demanding titles, but newer games struggled at higher resolutions or detail settings.

Some marketing confusion existed as well. Certain graphics cards advertised large memory capacities, such as 256MB or 512MB, even though only a small portion was physically installed on the card itself.

Comparison With NVIDIA TurboCache

Around the same period, NVIDIA introduced a competing technology called TurboCache.

Both systems shared a similar concept:

- Reduce dedicated memory costs

- Use system RAM as supplemental graphics memory

- Improve affordability for entry-level graphics solutions

However, differences existed in implementation and driver optimization. Some users preferred ATI’s image quality and multimedia performance, while others favored NVIDIA’s driver support and gaming compatibility.

The competition between these technologies reflected the growing demand for low-cost graphics solutions in the mid-2000s PC market.

Impact on Budget Computing

The release of ATI HyperMemory helped bridge the gap between integrated graphics and expensive dedicated GPUs. Many consumers who could not afford premium graphics cards still gained access to better multimedia performance and improved graphical capabilities.

For students and office users, the technology offered enough power for daily computing tasks without significantly increasing PC costs. Internet cafes, schools, and small businesses also benefited from affordable graphics solutions during that era.

In many ways, HyperMemory represented an important transition period in graphics hardware development. It demonstrated how shared-memory concepts could improve user experiences even on low-cost systems.

How Modern Graphics Still Use Similar Ideas

Although the HyperMemory branding disappeared years ago, the core concept behind it still exists today.

Modern integrated graphics processors from Intel and AMD commonly use shared system memory instead of dedicated VRAM. Current APUs and integrated GPUs dynamically allocate RAM based on workload requirements, much like HyperMemory did in the past.

The difference is that modern systems use significantly faster memory technologies and improved architectures. As a result, shared-memory graphics solutions today perform far better than early implementations from the 2000s.

Unified memory designs in modern laptops, tablets, and compact devices also follow similar principles by allowing processors and graphics units to access the same memory pool efficiently.

Why ATI HyperMemory Matters Today

Technology enthusiasts and retro PC collectors still discuss ATI HyperMemory because it represents an important chapter in graphics hardware history. It showed how manufacturers balanced affordability and performance during a period of rapid computing growth.

For retro gaming systems and vintage computer builds, HyperMemory graphics cards remain interesting examples of budget engineering solutions from the PCI Express transition era.

They also serve as reminders of how much graphics technology has advanced. Features once considered innovative for entry-level systems are now standard in many modern integrated graphics processors.

Final Thoughts

ATI HyperMemory was not designed to compete with premium gaming graphics cards, but it successfully achieved its main purpose: providing affordable graphics performance for mainstream users. By combining small amounts of dedicated VRAM with shared system memory, ATI created a practical solution for budget PCs during the mid-2000s.

The technology helped reduce hardware costs, improved multimedia experiences, and allowed millions of users to enjoy better graphics without purchasing expensive GPUs. While its performance limitations prevented it from becoming a long-term high-end solution, the ideas behind HyperMemory still influence modern graphics architectures today.

For anyone exploring the history of computer graphics technology, HyperMemory remains an important innovation that demonstrated how creative engineering could make better graphics accessible to a wider audience.

FAQs

Q: What is ATI HyperMemory?

A: It is a graphics technology that allows a GPU to use system RAM as additional video memory.

Q: Was ATI HyperMemory good for gaming?

A: It worked well for older and casual games but struggled with the demanding titles of its day.

Q: Does HyperMemory use system RAM?

A: Yes, it dynamically borrows part of the computer’s RAM for graphics tasks.

Q: Is ATI HyperMemory still used today?

A: The branding is gone, but modern integrated GPUs use similar shared-memory concepts.

Q: Which company created HyperMemory?

A: ATI Technologies developed the technology before AMD acquired the company in 2006.